Tutorial

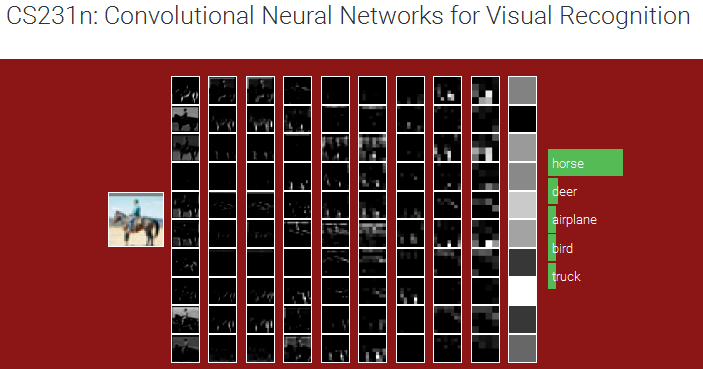

[ ]Convolutional Neural Networks for Visual Recognition

This course is offered by Stanford as CS231n. Computer Vision has become ubiquitous in our society, with applications in search, image understanding, apps, mapping, medicine, drones, and self-driving cars. Recent developments in neural network (aka “deep learning”) approaches have greatly advanced the performance of these state-of-the-art visual recognition systems. This course is a deep dive into details of the deep learning architectures with a focus on learning end-to-end models for these tasks, particularly image classification. During the 10-week course, students will learn to implement, train and debug their own neural networks and gain a detailed understanding of cutting-edge research in computer vision. The final assignment will involve training a multi-million parameter convolutional neural network and applying it on the largest image classification dataset (ImageNet). We will focus on teaching how to set up the problem of image recognition, the learning algorithms (e.g. backpropagation), practical engineering tricks for training and fine-tuning the networks and guide the students through hands-on assignments and a final course project. Much of the background and materials of this course will be drawn from the ImageNet Challenge.

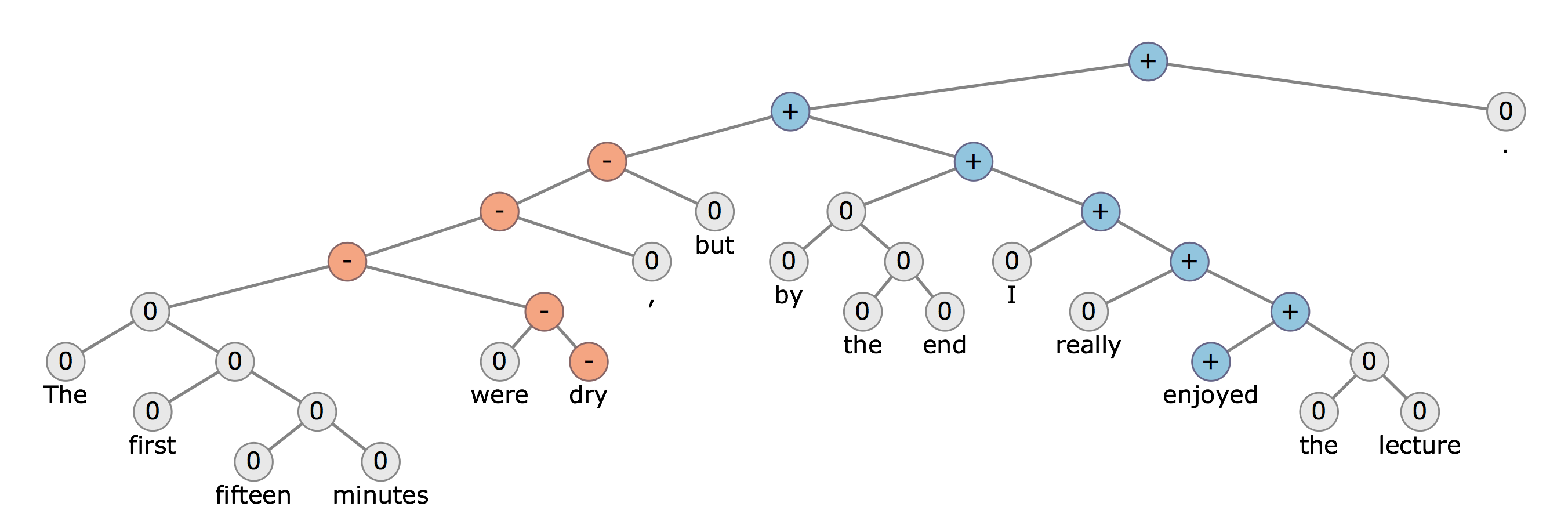

Deep Learning for Natural Language Processing

This course is offered by Stanford as CS224d. Natural language processing (NLP) is one of the most important technologies of the information age. Recently, deep learning approaches have obtained very high performance across many different NLP tasks. These models can often be trained with a single end-to-end model and do not require traditional, task-specific feature engineering. In this spring quarter course students will learn to implement, train, debug, visualize and invent their own neural network models. The course provides a deep excursion into cutting-edge research in deep learning applied to NLP. The final project will involve training a complex recurrent neural network and applying it to a large scale NLP problem. On the model side we will cover word vector representations, window-based neural networks, recurrent neural networks, long-short-term-memory models, recursive neural networks, convolutional neural networks as well as some very novel models involving a memory component. Through lectures and programming assignments students will learn the necessary engineering tricks for making neural networks work on practical problems.

Digital Photography

This course is made availalbe by Professor Emeritus of Computer Science at Stanford and now Principal Engineer at Google Marc Levoy.

An introduction to the scientific, artistic, and computing aspects of digital photography. Topics include lenses and optics, light and sensors, optical effects in nature, perspective and depth of field, sampling and noise, the camera as a computing platform, image processing and editing, and computational photography. We will also survey the history of photography, look at the work of famous photographers, and talk about composing strong photographs.