Tag: gan

A Generative Adversarial Network (GAN) is a machine learning technique that consists of two neural networks, the generator and the discriminator, engaged in a game-like framework. The generator's role is to create synthetic data, such as images or text, while the discriminator's task is to distinguish between real data and the generated data. They iteratively improve each other: the generator tries to produce data that is indistinguishable from real data, and the discriminator gets better at telling them apart. This adversarial process results in the generator producing high-quality, realistic data, making GANs widely used for tasks like image generation, style transfer, and data augmentation.

- Raising the Cost of Malicious AI-Powered Image Editing (03 Oct 2023)

This is my reading note for Raising the Cost of Malicious AI-Powered Image Editing. This paper proposes a method to prevent an image from being edited by a diffusion model. The method is based on an adversarial attack: learn a perturbation to the target image such that the model (encoder or diffusion) generates noise or a degraded image. However, this method may not always work, and it may fail when the model changes.
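As a rough illustration of the idea in this note, the sketch below runs a projected signed-gradient attack against a toy linear "encoder" and pushes its output toward zero. The matrix `W`, the budget `eps`, the step size, and all helper names are assumptions made for illustration; the paper targets the real encoder or diffusion process of the model, not a linear map.

```python
# Toy sketch: learn a small perturbation that shrinks an encoder's output.
# W, eps, step and n_steps are illustrative assumptions, not values from the paper.

def encode(W, x):
    """Linear stand-in for an image encoder: z = W x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def sq_norm(v):
    return sum(c * c for c in v)

def grad_sq_norm(W, x):
    """Gradient of ||W x||^2 with respect to x, i.e. 2 * W^T (W x)."""
    z = encode(W, x)
    return [2.0 * sum(W[r][c] * z[r] for r in range(len(W))) for c in range(len(x))]

def sign(v):
    return (v > 0) - (v < 0)

def perturb(W, x, eps=0.1, step=0.02, n_steps=10):
    """Signed-gradient descent on ||encode(x + delta)||^2, clamped to |delta_i| <= eps."""
    delta = [0.0] * len(x)
    for _ in range(n_steps):
        g = grad_sq_norm(W, [xi + di for xi, di in zip(x, delta)])
        delta = [max(-eps, min(eps, di - step * sign(gi))) for di, gi in zip(delta, g)]
    return delta

W = [[1.0, 0.5], [-0.5, 1.0]]   # hypothetical encoder weights
x = [1.0, 2.0]                  # hypothetical "image" with two pixels
delta = perturb(W, x)
x_adv = [xi + di for xi, di in zip(x, delta)]
before = sq_norm(encode(W, x))
after = sq_norm(encode(W, x_adv))
```

Note that `delta` is computed against one specific `W`, which mirrors the caveat in the note: nothing guarantees the perturbation still works once the model changes.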

- Diff-Instruct A Universal Approach for Transferring Knowledge From Pre-trained Diffusion Models (28 Aug 2023)

This is my reading note on Diff-Instruct: A Universal Approach for Transferring Knowledge From Pre-trained Diffusion Models. The paper explains the theory of using a pre-trained diffusion model to guide the training of a generator model. It shows that both DreamFusion and GAN are special cases of it: score distillation sampling (SDS) from DreamFusion uses a Dirac distribution to represent the generator, while GAN learns a discriminator to represent the data distribution. To this end, it proposes the Integral Kullback-Leibler (IKL) divergence, which is tailored for diffusion models (DMs) by calculating the integral of the KL divergence along a diffusion process (instead of at a single step), and which the paper shows to be more robust in comparing distributions with misaligned supports.

- Efficient Geometry-aware 3D Generative Adversarial Networks (27 Aug 2023)

This is my reading note on Efficient Geometry-aware 3D Generative Adversarial Networks. EG3D proposes a 2D-to-3D generation method based on StyleGAN and a triplane-based NeRF. The high-level idea is to use StyleGAN to generate a triplane, which is then rendered into images. The rendered image is then discriminated against the input images at two resolutions. The camera pose is also required to generate the triplane.

- Efficient Geometry-aware 3D Generative Adversarial Networks (25 Jul 2023)

This is my reading note for Efficient Geometry-aware 3D Generative Adversarial Networks. The paper proposes a 2D-to-3D generation method based on StyleGAN and a triplane-based NeRF. The high-level idea is to use StyleGAN to generate a triplane, which is then rendered into images. The rendered image is then discriminated against the input images at two resolutions. The camera pose is also required to generate the triplane.

- Advancing Example Exploitation Can Alleviate Critical Challenges in Adversarial Training (17 Jul 2023)

This is my reading note for Advancing Example Exploitation Can Alleviate Critical Challenges in Adversarial Training. The paper proposes a simple method to improve the performance of adversarial training. It is based on the observation that some samples affect robustness but not accuracy, and vice versa. Thus it proposes a method to adjust the sample weights accordingly.

- DreamBooth Fine Tuning Text-to-Image Diffusion Models for Subject-Driven Generation (14 Jul 2023)

This is my reading note for DreamBooth: Fine Tuning Text-to-Image Diffusion Models for Subject-Driven Generation. This paper proposes a personalization method for diffusion-based text-to-image generation. To achieve this, it first learns to align the visual content to be personalized with a rarely used text embedding; this text embedding is then inserted into the text prompt to control the image generation.

- ELECTRA Pre-training Text Encoders as Discriminators Rather Than Generators (09 Jul 2023)

This is my reading note for ELECTRA: Pre-training Text Encoders as Discriminators Rather Than Generators. This paper proposes to replace masked language modeling with a discriminative task: deciding whether each token comes from the authentic data distribution or was filled in by a generator model. Specifically, the model contains a generator trained with the masked language modeling objective and a discriminator that classifies whether a token was filled in by the generator or not.
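The discriminator's training signal described in this note can be sketched as below. This is a minimal illustration, not the paper's implementation: `make_rtd_example` and `toy_generator` are hypothetical names, and the generator is a fixed lookup table standing in for a real masked language model. One detail worth showing is that a token the generator happens to guess correctly is labeled as original, not replaced.

```python
# Sketch of building replaced-token-detection labels (1 = replaced, 0 = original).
# The function names and the toy generator are assumptions for illustration only.

def make_rtd_example(tokens, mask_positions, generator_sample):
    """Fill masked positions with generator samples and label each token."""
    corrupted = list(tokens)
    for pos in mask_positions:
        corrupted[pos] = generator_sample(tokens, pos)
    # A position counts as replaced only if the sampled token differs from the original.
    labels = [int(c != t) for c, t in zip(corrupted, tokens)]
    return corrupted, labels

def toy_generator(tokens, pos):
    """Hypothetical stand-in for the masked-LM generator: a fixed guess per position."""
    guesses = {0: "the", 2: "ate"}
    return guesses.get(pos, tokens[pos])

tokens = ["the", "chef", "cooked", "the", "meal"]
corrupted, labels = make_rtd_example(tokens, [0, 2], toy_generator)
```

Here position 0 is masked but the generator recovers the original token, so only position 2 ends up labeled as replaced.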

- Blended Latent Diffusion (06 Jul 2023)

This is my reading note for Blended Latent Diffusion. The major innovation of the paper is to apply the mask in latent space instead of image space to reduce boundary inconsistency, as the foreground is generated from the VAE but the background is not. In addition, to handle thin mask details that get lost in the downsampling step, it dilates the mask first.
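To see why dilating before downsampling helps, here is a small sketch on a binary mask. The square dilation window, the nearest-neighbour downsampling, and the factor of 4 are assumptions chosen to keep the example short; the paper's actual resampling and dilation settings may differ.

```python
# Sketch: a thin mask line can vanish when downsampled to latent resolution,
# unless the mask is dilated first. All sizes here are illustrative assumptions.

def dilate(mask, radius=1):
    """Binary dilation with a (2*radius+1) x (2*radius+1) square window."""
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            if mask[y][x]:
                for yy in range(max(0, y - radius), min(h, y + radius + 1)):
                    for xx in range(max(0, x - radius), min(w, x + radius + 1)):
                        out[yy][xx] = 1
    return out

def downsample(mask, factor):
    """Nearest-neighbour downsampling: keep every `factor`-th pixel."""
    return [row[::factor] for row in mask[::factor]]

# 8x8 mask with a one-pixel-wide vertical line at column 3
mask = [[1 if x == 3 else 0 for x in range(8)] for _ in range(8)]

plain = downsample(mask, 4)                # samples columns 0 and 4, so the line is lost
dilated = downsample(dilate(mask, 1), 4)   # line now spans columns 2..4 and survives
```

Without dilation the downsampled mask is empty, while the dilated version keeps the line in the second column.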

- Blended Latent Diffusion (05 Jul 2023)

This is my reading note for Blended Latent Diffusion. The major innovation of the paper is to apply the mask in latent space instead of image space to reduce boundary inconsistency, as the foreground is generated from the VAE but the background is not. In addition, to handle thin mask details that get lost in the downsampling step, it dilates the mask first.

- MeshDiffusion Score-based Generative 3D Mesh Modeling (02 Jul 2023)

This is my reading note for MeshDiffusion: Score-based Generative 3D Mesh Modeling. This paper represents a 3D mesh as a deformable tetrahedral grid, defined on a regular 3D grid with 4-channel features: the 3D positional deformation of each vertex and the signed distance function value that defines the surface.

- GIRAFFE Representing Scenes as Compositional Generative Neural Feature Fields (25 Sep 2022)

This is my reading note for GIRAFFE: Representing Scenes as Compositional Generative Neural Feature Fields. The paper aims to provide more control over 3D object rendering with NeRF, for example moving objects in the 3D scene, adding or deleting objects, and so on. To achieve this, GIRAFFE proposes to model the objects and the background in the scene separately and then composite them together for rendering. In addition, unlike NeRF, GIRAFFE uses a learned discriminator instead of an L2 or L1 loss as the loss function, so it is a GAN.

- Stable Diffusion (23 Sep 2022)

This is my 2nd reading note on diffusion models, focusing on Stable Diffusion, aka High-Resolution Image Synthesis with Latent Diffusion Models. By decomposing the image formation process into a sequential application of denoising autoencoders, diffusion models (DMs) achieve state-of-the-art synthesis results on image data and beyond. However, as mentioned in the diffusion note, DMs suffer from high computational cost. The proposed Latent Diffusion Models (LDMs) reduce the computational cost by operating in latent space and introduce cross-attention to enable multi-modality conditioning.

- Diffusion Model (22 Sep 2022)

This is my 1st reading note on recent progress in diffusion models. It is based on Diffusion Models: A Comprehensive Survey of Methods and Applications. Diffusion probabilistic models were originally proposed as a latent variable generative model inspired by non-equilibrium thermodynamics. The essential idea of diffusion models is to systematically perturb the structure in a data distribution through a forward diffusion process, and then recover the structure by learning a reverse diffusion process, resulting in a highly flexible and tractable generative model.
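The forward (perturbing) half of this idea can be sketched with the common discrete-time Gaussian formulation, where a noisy sample at step t is drawn in closed form as sqrt(abar_t) * x_0 + sqrt(1 - abar_t) * noise. This is one instance among the formulations the survey covers; the linear noise schedule, its endpoint values, and the function names below are assumptions for illustration.

```python
import math
import random

# Sketch of a Gaussian forward diffusion process.
# The linear schedule and its endpoints are assumed, commonly used defaults.

def linear_betas(T, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances, increasing linearly over T steps."""
    return [beta_start + (beta_end - beta_start) * t / (T - 1) for t in range(T)]

def alpha_bars(betas):
    """Cumulative products of (1 - beta_t): how much signal remains at each step."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out

def q_sample(x0, t, abar, rng):
    """Draw x_t from N(sqrt(abar_t) * x0, (1 - abar_t) * I)."""
    a = abar[t]
    return [math.sqrt(a) * v + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for v in x0]

T = 1000
abar = alpha_bars(linear_betas(T))
rng = random.Random(0)
x0 = [1.0, -1.0, 0.5]                    # hypothetical data point
x_early = q_sample(x0, 0, abar, rng)     # almost unchanged
x_late = q_sample(x0, T - 1, abar, rng)  # close to pure Gaussian noise
```

Because `abar` shrinks toward zero, the structure of `x0` is gradually destroyed; a learned reverse process would then be trained to undo these steps.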

- My Paper Reading List for 3D Face Reconstructions (20 Mar 2021)

Here is my paper reading list for 3D face reconstruction, based on Papers with Code. 3D face reconstruction is the task of reconstructing a face from an image into a 3D form (or mesh). Most of the papers on the list are from 2017 to 2020.

- Must-read AI Papers (16 Feb 2021)

I will create a new reading note series based on Must-read AI Papers from Crossminds.ai.

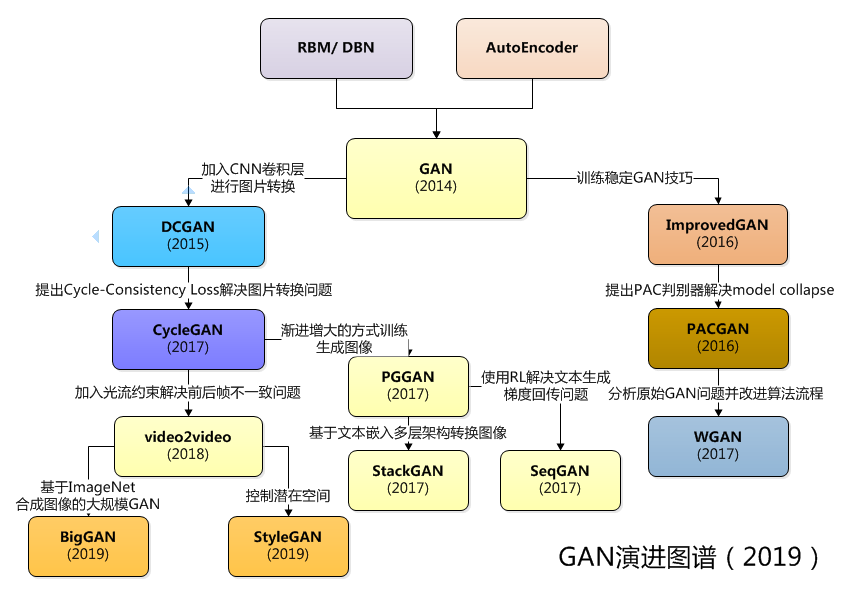

- GAN Roadmap (07 Sep 2019)

This post is based on AlphaTree.

- Generative adversarial network (05 Apr 2019)